Introduction

This paper is less a guide to writing a test strategy and more a drive along the country lanes I followed as I tried to solve the problem of how to write a generic test strategy for a non-homogeneous organisation.

I work for a medium-sized IT company where we deliver both products and projects. These range from very large customisations of CRM systems with multiple integrations to small web site developments, where the site consists mainly of pictures and text with very little functionality, and everything in between: data warehousing, financial systems, even some embedded work. Our projects run from clean Agile through modified agile all the way back to traditional waterfall.

Part of my task in setting up a “test centre of excellence” is to design a one-size-fits-all test strategy, without it being so high level that it is meaningless and of no use to the testers and the development team. At the same time I am trying to deliver a message to the customer that we care about, and take care of, testing and quality, without it being just a marketing exercise.

I was feeling a little ‘mission impossible’. Then I found a definition of test strategy, in Swedish, that translates as “The test strategy describes how testing is normally carried out within the area”, and I knew I was doomed!

In the beginning

What I was pretty sure about was that, in terms of the V-model, the one test level that was mandatory was unit testing, written by the developers. It also seemed that this would hold whatever the type of project or development technique. So that went in directly. But what I couldn’t say was how this would be done, and I definitely couldn’t try to enforce TDD: for some groups this was a black hole, for others it was a red flag, and we even had a group for which the concept was green; that is, the methodology was both applicable and applied. So we have a step in the company-wide test strategy that unit tests should always be written and maintained. I also had a secret strategy of helping development teams work towards continuous integration, educating project managers in the basic concepts of TDD, and trying to find good arguments for project managers to insist on unit test maintenance in the face of delivery deadlines. I believe this is called a test strategy of being sneaky!

At the end

We needed to know what success looked like, both in terms of testing and in terms of releasing the product, so I was pretty sure that I wanted acceptance testing in there. But I got stuck quicker than I expected: although it was easy to talk about user acceptance testing in projects, in the product development areas there was no single customer to do the accepting. But no panic, I just needed a little readjustment of focus from the external customer to the internal customer. I found that focussing on the concept of ‘Definition of Done’ was very useful in many teams, and just talking about acceptance criteria with the more traditional bunches also worked. So we have a test strategy that also covers the end of the development cycle. It was just that I couldn’t produce any detailed text that covered the whole spectrum.

Now is where I admit that I had another secret strategy, and that was to have as small (but effective) an amount of text as possible. So the company-wide strategy is that we should agree the best way to set acceptance criteria for the project/product in question and then actually implement that. And just before you all start commenting: I am well aware that this task actually has to be started at the beginning of the development cycle rather than left to the end.

The middle

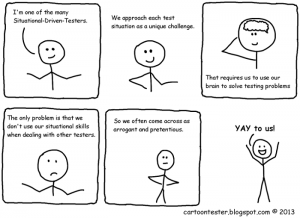

So in the middle of the development cycle what is the best test strategy? I started by thinking HOW do we test? That answer was ‘it depends’; it depends on what we are testing, what the team culture is, how long we have, what kind of tester there is, what skills and tools are available etc. How on earth do we design a generic strategy that covers all of that? Our strategy seemed to be, ‘do whatever seems to be suitable at the time’, or to make it sound a little better our strategy is to THINK!

Around this time I learned a new word, ‘heuristic’ (the Oxford dictionary defines this as “Proceeding to a solution by trial and error or by rules that are only loosely defined.”), which quickly led me to James Bach’s Heuristic Test Strategy Model. This is basically a very comprehensive checklist of everything that you should consider when creating your test strategy (http://www.satisfice.com/tools/htsm.pdf). It is a great list, but it doesn’t get me very far when I am trying to be generic and still meaningful without too much text. (Let’s face it, most of us test managers are pretty sure no one actually reads these documents; they just want us to get on with it and test!)

Anyway, I checked out plenty of lists and web sites and papers and came up with my own list of the absolute minimum a tester needs to have:

- A test environment

Seriously that was it. I already had acceptance criteria in there and ‘Thinking’, so now I can survive as a tester. However this short list is just not very impressive. So I added some points that would be good to have if there was time and budget:

- A tool for dealing with the defect reports, preferably not Excel

- A tool for managing the test cases and planning the test runs; as these tools are often expensive, I can live with Excel for quite a long time

But for me these two points aren’t a strategy; they are just tools to make my life easier. In any case we have a large variation of tools being used across this company and I can’t dictate just one. I can only recommend and make suggestions. But still I added:

- A process for change control,

and this isn’t actually pure test, I just like to have this one clearly defined and in place to make life easier when arguing about what goes in the next release.

But I still had a nagging feeling. I had checked out quite a few test strategy templates while I was surfing and I finally realised what the problem was: a total confusion between strategy, tactics and prescribed methods. These are not the same thing (one explanation, http://smallbusiness.chron.com/differences-between-strategy-plans-tactics-23239.html, is fairly clear) and yet the test strategy templates I saw crammed them all in. To make it worse, some experts are not even in agreement over what a plan is. I am pretty clear in my head that a plan is about resources and timetables. All that stuff about where the test environment is, who controls it, and what priority definitions we are using has nothing to do with planning or strategy; it is just methods and tactics. The strategy is that we should have a test management tool which helps us plan and keep track of test execution; the tactic/method is which tool we choose and how we configure it.

So now I made a decision that I needed three points on my PowerPoint slide and added a personal favourite: to push for Risk Based Testing (there is a good presentation on the subject from Hans Schaefer: http://www.cs.tut.fi/tapahtumat/testaus04/schaefer.pdf) so that we have a basis for prioritisation when it comes to test planning.
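To make the prioritisation idea concrete, here is a minimal sketch of one common way to rank test areas by risk. It assumes the usual simplification that risk exposure is likelihood of failure multiplied by impact of failure, each scored from 1 to 5. The `RiskItem` class, the `prioritise` function, the scoring scale and the example areas are all made up for illustration; they are not taken from the presentation or from our actual strategy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskItem:
    """A hypothetical test area with assumed 1-5 risk scores."""
    name: str
    likelihood: int  # 1 = unlikely to fail, 5 = very likely to fail
    impact: int      # 1 = minor annoyance, 5 = severe damage

    @property
    def exposure(self) -> int:
        # Assumed formula: exposure = likelihood * impact
        return self.likelihood * self.impact


def prioritise(items):
    """Return the items ordered from highest to lowest risk exposure."""
    return sorted(items, key=lambda item: item.exposure, reverse=True)


# Illustrative scores only; a real team would agree these together.
areas = [
    RiskItem("image gallery", likelihood=2, impact=1),
    RiskItem("CRM integration", likelihood=4, impact=5),
    RiskItem("invoice calculation", likelihood=2, impact=5),
]

for item in prioritise(areas):
    print(f"{item.exposure:>2}  {item.name}")
```

With these made-up scores the CRM integration comes out on top, so that is where the test planning would start. The numbers matter less than the fact that the team has discussed them.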

So then I was back to wondering how I could write ‘Thinking’ as a valid strategy. And that is where I got stuck; I dressed it up as a ‘conscious decision about the best approach to testing in any given situation’ but it still seemed a cop-out to me. That is when I downloaded the latest copy of Better Software (Nov/Dec 2014, Techwell) and read the article from Jon Hager, “How to design a test strategy”. It was very enjoyable, as he had found a good way to say ‘our strategy is to think about it’ and then goes on to list a number of tactics that can be successful, on their own or combined. There are plenty more methods and techniques that can be added, like Session Based Testing.

This means that my strategy has suddenly become a waste of time, as I could have summed up the whole thing by saying ‘read Jon Hager’s article’. But then I have to write it in Swedish. So here we have it, my test strategy (translated here from the Swedish):

To improve the quality of our deliveries through testing, we must always have:

- Risk analysis (with guidelines for how it can be done)

- A conscious choice of approach (with a checklist of different methods that can be applied)

- Acceptance criteria (with guidelines for how it can be done)

To support testing after the code has left the developer’s workstation, we must have:

- Unit testing performed by the developer

- A test environment in place and under the tester’s control

- A tool for managing defects and test cases (with guidelines for how it can be configured)

- A checklist for test planning

And I even fulfilled my personal goal of making it short and sweet; now I just need to make it consumer friendly.
